Understanding the core of the transformer is essential for anyone interested in modern machine learning. Transformers have changed how we process language, images, and other data. The architecture relies on attention, a mechanism that captures relationships between the elements of an input. However, many learners struggle to grasp this core concept fully.

The transformer consists of several components, each playing a vital role. At its heart lies the attention mechanism. It allows the model to weigh the importance of different inputs. This can be challenging to visualize and comprehend. Many learners spend hours trying to understand how attention affects outcomes.

Common pitfalls include focusing too much on technical jargon. It’s easy to get lost in the complexity. Yet, understanding the core of the transformer is not just for experts. Beginners need to appreciate its function and impact. This knowledge can enhance their skills and open new opportunities. Reflection on these aspects is crucial for effective learning.

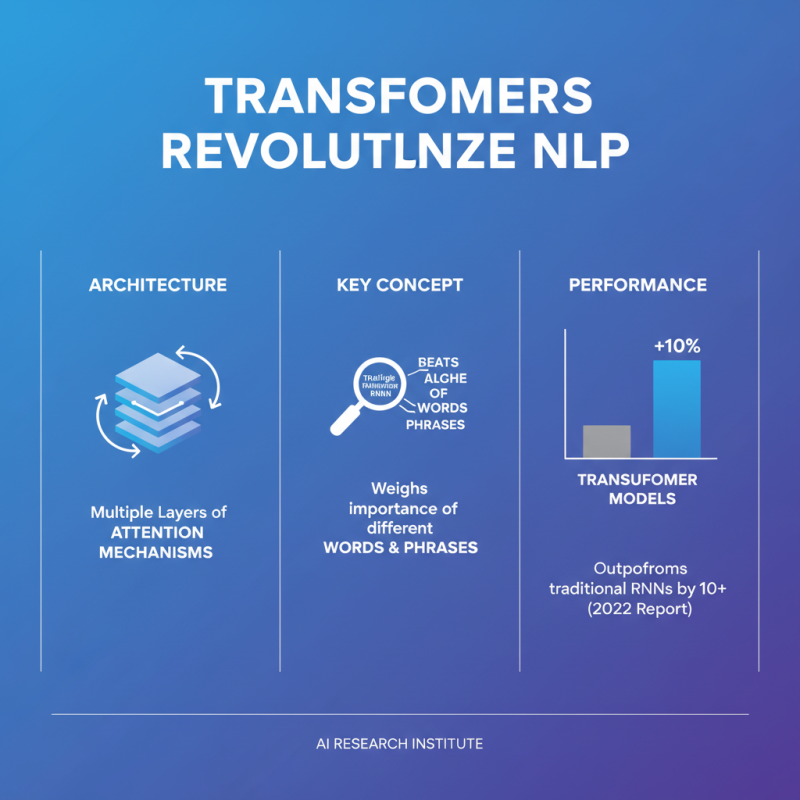

Transformers have revolutionized the field of Natural Language Processing (NLP). They rely on an architecture that includes multiple layers of attention mechanisms. This allows the model to weigh the importance of different words and phrases in a sentence. A 2022 report by the AI Research Institute highlighted that transformer models outperform traditional RNN architectures by more than 10% in various benchmarks.

Understanding the components of a transformer is crucial. In the original design, the primary components are the encoder and the decoder. Encoders process the input data and extract useful features. In contrast, decoders generate the output sequences. This structure enables transformers to handle complex tasks, like translation and summarization, effectively. Many later models keep only one of the two: BERT uses only the encoder stack, while GPT-style models use only the decoder stack.

Tip: Focus on the self-attention mechanism. It allows the model to consider the context of words around a given input. This is particularly important when dealing with ambiguous language, where word meaning depends on surrounding terms.

Another essential aspect is the concept of positional encoding. Since transformers lack recurrent connections, they use positional encodings to maintain the sequence information within the input data. This clever trick ensures that the model understands the order of words, which is key when generating coherent text.

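Tip: Experiment with hyperparameters such as the learning rate, the number of layers, and the number of attention heads. Fine-tuning a pretrained model on task-specific data also helps achieve the best results for specific tasks. Each model configuration has strengths and weaknesses, and exploring these is necessary for optimal performance.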

Understanding the core of the Transformer model requires a deep dive into attention and, in particular, self-attention. These concepts lie at the heart of how Transformers process information. Attention mechanisms allow a model to focus on the relevant parts of the data. Self-attention is the special case in which the queries, keys, and values all come from the same input, so the model weighs the significance of each element against every other element of that input. This leads to better performance in various tasks.
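To make the weighing concrete, here is a minimal sketch of single-head scaled dot-product self-attention in NumPy. The function names, the toy dimensions, and the random projection matrices are assumptions for illustration; in a trained model the projections are learned.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the maximum before exponentiating for numerical stability.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x: (seq_len, d_model) token representations.
    w_q, w_k, w_v: (d_model, d_k) projections (random here, learned in practice).
    Returns the attended output and the (seq_len, seq_len) weight matrix.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # similarity of every query with every key
    weights = softmax(scores, axis=-1)       # each row is a distribution over tokens
    return weights @ v, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_k = 4, 8, 8              # toy sizes chosen for illustration
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

output, weights = self_attention(x, w_q, w_k, w_v)
print(weights.round(2))  # row i shows how strongly token i attends to each token
```

Each row of `weights` sums to 1, so it can be read directly as the share of attention one token gives to every token in the sequence, including itself.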

One remarkable statistic highlights the impact of self-attention: models utilizing these mechanisms have shown a 10-30% improvement in accuracy on natural language processing tasks. Understanding how these mechanisms work is vital. Here are a few tips for grasping their essence.

Visualize attention scores as weights. This helps illustrate how different words contribute to the meaning of a sentence. Another tip: experiment with small datasets to observe how attention changes. This hands-on approach fosters a deeper understanding. Learning the intricacies of self-attention can be complex. Mistakes often lead to confusion about relationships within the data. Reflecting on these challenges is crucial for growth.

Positional encoding is a crucial concept in understanding transformers. Unlike recurrent neural networks, which read tokens one at a time, transformers process all input tokens simultaneously. This creates a challenge: how does the model know the order of these tokens? Positional encoding solves this problem. It adds a position-dependent vector to each token's representation, indicating its position within the sequence.
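One widely used scheme is the fixed sinusoidal encoding from the original Transformer paper. The sketch below assumes an even `d_model` and uses zero vectors as placeholder token embeddings; the function name is chosen for illustration.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a (seq_len, d_model) matrix of sinusoidal position encodings.

    Assumes d_model is even. Even columns hold sines, odd columns hold cosines.
    """
    positions = np.arange(seq_len)[:, None]        # shape (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]       # shape (1, d_model // 2), values 2i
    angles = positions / np.power(10000.0, dims / d_model)
    encoding = np.zeros((seq_len, d_model))
    encoding[:, 0::2] = np.sin(angles)
    encoding[:, 1::2] = np.cos(angles)
    return encoding

pe = sinusoidal_positional_encoding(seq_len=6, d_model=8)

# Placeholder embeddings (all zeros) stand in for real token embeddings.
token_embeddings = np.zeros((6, 8))
inputs = token_embeddings + pe  # the position signal is simply added
print(pe.round(3))
```

Every row of `pe` is different, so two identical tokens at different positions end up with different input vectors.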

Without positional encoding, the transformer would treat every input as a bag of words, with no information about order. Imagine a sentence where word order is vital, such as "The cat chased the mouse." Swapping the nouns gives "The mouse chased the cat," which uses the same words but means the opposite. Positional encoding marks where each word sits, so the attention layers can take order into account when relating words to one another. However, implementing it isn't without pitfalls. The choice of encoding method can introduce unwanted patterns. Some techniques may overly emphasize certain positions, skewing the model’s learning.
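The bag-of-words behaviour can be checked directly. The sketch below is a toy setup, not a trained model: the word vectors, projection matrices, and position vectors are all random stand-ins. It compares the attention output for "chased" in the two sentences, first without and then with a position signal.

```python
import numpy as np

def softmax(x):
    exp = np.exp(x - x.max(axis=-1, keepdims=True))
    return exp / exp.sum(axis=-1, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    return softmax(q @ k.T / np.sqrt(k.shape[-1])) @ v

rng = np.random.default_rng(1)
original = ["the", "cat", "chased", "the", "mouse"]
swapped = ["the", "mouse", "chased", "the", "cat"]

# Random stand-ins for learned word embeddings and projections.
vocab = {word: rng.normal(size=4) for word in sorted(set(original))}
w_q, w_k, w_v = (rng.normal(size=(4, 4)) for _ in range(3))
# Random stand-in for a positional encoding, one vector per position.
positions = rng.normal(size=(len(original), 4))

def encode(sentence):
    return np.stack([vocab[word] for word in sentence])

# Without positions: "chased" (index 2) sees the same set of words either way.
out_a = self_attention(encode(original), w_q, w_k, w_v)
out_b = self_attention(encode(swapped), w_q, w_k, w_v)
same_without_positions = np.allclose(out_a[2], out_b[2])

# With positions: "cat" before the verb differs from "cat" after it.
out_a_pos = self_attention(encode(original) + positions, w_q, w_k, w_v)
out_b_pos = self_attention(encode(swapped) + positions, w_q, w_k, w_v)
same_with_positions = np.allclose(out_a_pos[2], out_b_pos[2])

print(same_without_positions, same_with_positions)
```

Without the position vectors, the representation of "chased" cannot distinguish who chased whom; adding them breaks that symmetry.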

Recognizing the importance of positional encoding requires careful consideration. Simply putting values in place is not enough. The design must reflect the sequence's nature. This is a challenge that researchers continue to explore. Understanding the nuances in positional encoding is essential for improving the performance of transformer models. Each iteration offers insights, making it an area ripe for innovation.

Understanding the training process of a Transformer model hinges on backpropagation and various optimization techniques. Backpropagation, in essence, is the method used to calculate gradients. It applies the chain rule backward through the network, layer by layer, to determine how much each parameter contributed to the prediction error. An optimizer then uses these gradients to update each layer's weights so that the loss function decreases.
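As a small illustration of the gradient calculation, the sketch below backpropagates by hand through a hypothetical two-parameter network, `y_hat = w2 * tanh(w1 * x)`, and checks the result against finite differences. The network, the input values, and the step size of 0.1 are assumptions chosen to keep the example tiny; real frameworks compute these gradients automatically.

```python
import numpy as np

def forward(params, x, y):
    w1, w2 = params
    h = np.tanh(w1 * x)                 # hidden activation
    y_hat = w2 * h                      # prediction
    loss = 0.5 * (y_hat - y) ** 2       # squared error
    return loss, (h, y_hat)

def backward(params, x, y):
    """Apply the chain rule from the loss back to each parameter."""
    w1, w2 = params
    _, (h, y_hat) = forward(params, x, y)
    d_y_hat = y_hat - y                 # dL/dy_hat
    d_w2 = d_y_hat * h                  # dL/dw2
    d_h = d_y_hat * w2                  # dL/dh
    d_w1 = d_h * (1.0 - h ** 2) * x     # tanh'(z) = 1 - tanh(z)^2
    return np.array([d_w1, d_w2])

def numerical_grad(params, x, y, eps=1e-6):
    """Central finite differences, used only to verify the analytic gradient."""
    grads = np.zeros_like(params)
    for i in range(len(params)):
        step = np.zeros_like(params)
        step[i] = eps
        loss_plus, _ = forward(params + step, x, y)
        loss_minus, _ = forward(params - step, x, y)
        grads[i] = (loss_plus - loss_minus) / (2 * eps)
    return grads

params = np.array([0.5, -1.5])
x, y = 2.0, 1.0

analytic = backward(params, x, y)
numeric = numerical_grad(params, x, y)

# One plain gradient-descent update using the backpropagated gradient.
loss_before, _ = forward(params, x, y)
loss_after, _ = forward(params - 0.1 * analytic, x, y)
print(analytic, numeric, loss_before, loss_after)
```

The two gradients should agree closely, and the single update step lowers the loss on this example.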

Optimization techniques are also crucial. They play a pivotal role in improving the model's performance. Common methods include Adam, SGD, and RMSprop. Using these techniques effectively can impact training speed and accuracy. For example, Adam adapts learning rates for individual parameters, leading to more efficient training. Yet, relying solely on one technique may not yield the best outcomes. Experimenting with different methods can uncover unique insights.
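The per-parameter adaptation in Adam can be seen in a few lines. This is a minimal sketch of the standard Adam update rule applied to a toy quadratic; the function name `adam_step`, the loss, and the learning rate of 0.01 are assumptions for illustration.

```python
import numpy as np

def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update. m and v are running averages of grad and grad**2."""
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad ** 2
    m_hat = m / (1 - beta1 ** t)        # bias correction for the zero initialisation
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (np.sqrt(v_hat) + eps)
    return param, m, v

# Toy loss f(p) = sum(scale * p**2): the two gradients differ by a factor of 100.
scale = np.array([1.0, 100.0])

def loss(p):
    return float(np.sum(scale * p ** 2))

param = np.array([1.0, 1.0])
m = np.zeros_like(param)
v = np.zeros_like(param)
initial_loss = loss(param)

# After the first step both coordinates have moved by about lr,
# even though their gradients are 2 and 200.
first_step, m, v = adam_step(param, 2 * scale * param, m, v, t=1, lr=0.01)

param = first_step
for t in range(2, 501):
    grad = 2 * scale * param
    param, m, v = adam_step(param, grad, m, v, t, lr=0.01)

final_loss = loss(param)
print(first_step, initial_loss, final_loss)
```

Plain SGD with a single learning rate would take a step 100 times larger along the second coordinate than along the first; Adam's normalisation by the running gradient magnitude evens this out.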

Training Transformers isn't straightforward. Challenges arise, such as overfitting, where the model memorizes the training data instead of learning patterns that generalize. Regularization techniques such as dropout and weight decay help combat this, but they require careful tuning. Similarly, learning rates can make or break a training session. Too high, and the model may diverge. Too low, and it could take ages to converge. Reflecting on these aspects is crucial for mastering Transformer training processes.
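The effect of the learning rate shows up even on the simplest possible problem. The sketch below runs plain gradient descent on f(x) = x², a stand-in for a real loss, with three illustrative learning rates; the specific values are assumptions chosen to show each regime.

```python
def gradient_descent(lr, steps=50, start=1.0):
    """Minimise f(x) = x**2 with plain gradient descent; return the final |x|."""
    x = start
    for _ in range(steps):
        x = x - lr * 2 * x              # gradient of x**2 is 2x
    return abs(x)

# Each step multiplies x by (1 - 2 * lr), so that factor decides the outcome.
too_high = gradient_descent(lr=1.1)     # factor -1.2: |x| grows every step
too_low = gradient_descent(lr=0.001)    # factor 0.998: barely moves in 50 steps
reasonable = gradient_descent(lr=0.1)   # factor 0.8: shrinks steadily toward 0

print(too_high, too_low, reasonable)
```

On a real Transformer the loss surface is far more complicated, but the same three regimes (divergence, stalling, and steady progress) appear.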

Transformers have reshaped the landscape of natural language processing (NLP). Their architecture allows for efficient handling of language data. Unlike earlier recurrent models, which at most used attention as an add-on, transformers rely on attention as their main building block and drop recurrence entirely. This helps the model capture context better, leading to improved results.

Applications of transformers extend beyond traditional language tasks. They excel in machine translation, making it possible to convert text between languages seamlessly. Additionally, transformers can summarize large documents effectively. This makes information consumption quicker and more accessible.

However, transformers are not without challenges. Training them requires vast amounts of data and computational power. Not all datasets are well-suited for this purpose. The models may struggle with nuanced language or idiomatic expressions. As researchers delve deeper, they must confront these imperfections. Understanding and addressing these issues is crucial for the future of NLP.